Your smartphone is not really smart

Pick up your phone. It looks like a slab of glass with delusions of grandeur. It opens apps, takes photos, streams music, argues with the cloud, and occasionally restarts at the worst possible moment because software updates have a gift for bad timing.

Underneath all that polish, your phone is not thinking in the human sense. It is moving through physical states: high or low, present or absent, off or on. The language is blunt because the machine needs it to be blunt.

That is the part worth paying attention to. A light switch can only flip between two states, and yet that same kind of physical choice became the foundation for numbers, logic, software, the internet, and eventually the AI tools now wandering around it with alarming confidence.

What binary actually means

Binary often gets treated like a secret computer language, but the basic idea is almost annoyingly ordinary. It is a way of representing information using two possible states.

A bit is the smallest unit of information we can work with. Think of it as one choice between two possibilities. A coin toss can carry that kind of information. So can a yes-or-no answer, a punched card with a hole or no hole, or a switch that is either off or on.

One switch does not give you much room to work with. Add more switches, though, and the combinations begin to multiply. With enough of those clean little choices, machines can represent numbers, text, sound, images, instructions, and decisions.
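To make the multiplying concrete, here is a small Python sketch. The function names are invented for illustration, and ASCII is just one of many ways to map text onto bits. The only real claim is the arithmetic: every extra switch doubles the number of patterns available.

```python
from itertools import product


def count_patterns(n_switches: int) -> int:
    """Each switch has two states, so n switches give 2**n patterns."""
    return 2 ** n_switches


def all_patterns(n_switches: int) -> list[str]:
    """List every on/off pattern, written as strings of 0s and 1s."""
    return ["".join(bits) for bits in product("01", repeat=n_switches)]


def text_to_bits(text: str) -> str:
    """Show text as groups of eight bits, using ASCII as the agreed code."""
    return " ".join(format(byte, "08b") for byte in text.encode("ascii"))


if __name__ == "__main__":
    for n in (1, 2, 3, 8, 16):
        print(f"{n:>2} switches -> {count_patterns(n)} patterns")
    print(all_patterns(2))
    print(text_to_bits("Hi"))
```

Eight switches are already enough for 256 patterns, which is why a single byte can stand for a letter, a small number, or one shade in an image.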

Before computers were machines, computers were people

For a long time, “computer” was a job title. Human computers prepared mathematical tables and carried out calculations for navigation, engineering, astronomy, census work, artillery, and any other problem that demanded precision from a tired human being with paper in front of them.

The people doing this work were not stupid. Many were remarkably skilled. The problem was the job itself. It demanded patience, accuracy, and the emotional stability of someone who could stare at numbers all day without their soul quietly packing a bag and leaving.

One digit copied into the wrong place, one skipped step, one tired mind, and the answer could wander off into the bushes wearing a fake moustache.

That was the deeper problem. Calculation was trapped inside human attention, and human attention is fragile. Machines offered a way to move procedures out of people and into physical systems that could repeat them reliably.

Why binary won

Humans count in tens because we have ten fingers and history apparently decided fingers were a user interface. Circuits, unfortunately for our sense of elegance, do not care what feels natural to us.

Electricity does not like being subtle. A decimal electrical system would ask a machine to recognise ten delicate shades of signal. That is like trying to judge the brightness of a candle in a nightclub while the fog machine is choking the room and the strobe lights are interrogating your retinas.

Binary avoids that nonsense by refusing to be delicate. It gives the machine two broad camps: off or on, dark or bright, low or high. The machine does not need to interpret a whole spectrum of fragile meanings. It only needs to recognise two reliable states.
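A rough simulation shows the difference. Everything specific here is an assumption made for illustration: a signal range of 0 to 5 volts, evenly spaced levels, and random noise drawn from a bell curve. Real circuits are messier, but the shape of the result is the point.

```python
import random

V_MAX = 5.0  # hypothetical signal range in volts, chosen for illustration


def encode(symbol: int, levels: int) -> float:
    """Spread the symbols evenly across the available voltage range."""
    return symbol * V_MAX / (levels - 1)


def decode(voltage: float, levels: int) -> int:
    """Pick the nearest valid level, clamped to the legal range."""
    step = V_MAX / (levels - 1)
    nearest = round(voltage / step)
    return min(max(nearest, 0), levels - 1)


def error_rate(levels: int, noise_sigma: float,
               trials: int = 10_000, seed: int = 0) -> float:
    """Send random symbols through a noisy wire and count misreadings."""
    rng = random.Random(seed)
    errors = 0
    for _ in range(trials):
        symbol = rng.randrange(levels)
        received = encode(symbol, levels) + rng.gauss(0, noise_sigma)
        if decode(received, levels) != symbol:
            errors += 1
    return errors / trials


if __name__ == "__main__":
    for levels in (2, 10):
        rate = error_rate(levels, noise_sigma=0.4)
        print(f"{levels:>2} levels: {rate:.1%} of symbols misread")
```

With the same amount of noise, the two-level signal has a wide gap between its states, while the ten-level signal has neighbours packed close enough to be mistaken for each other.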

Binary did not win because it looked clever on a chalkboard. It won because the physical world could handle it.

The cost of binary is translation

Binary is reliable because it is narrow. That narrowness comes with a price.

Human reality does not arrive neatly labelled. We have language, music, images, context, uncertainty, judgement, exceptions, office politics, and decisions that only made sense because someone skipped breakfast.

Machines do not naturally understand “sort of.” They do not naturally understand “maybe.” They need structure, rules, symbols, and data they can process.

Anyone who has built software knows the code is rarely the first problem. The first problem is getting the messy human situation to sit still long enough to be described. Only then can it be turned into something the machine can survive.

Who was Claude Shannon?

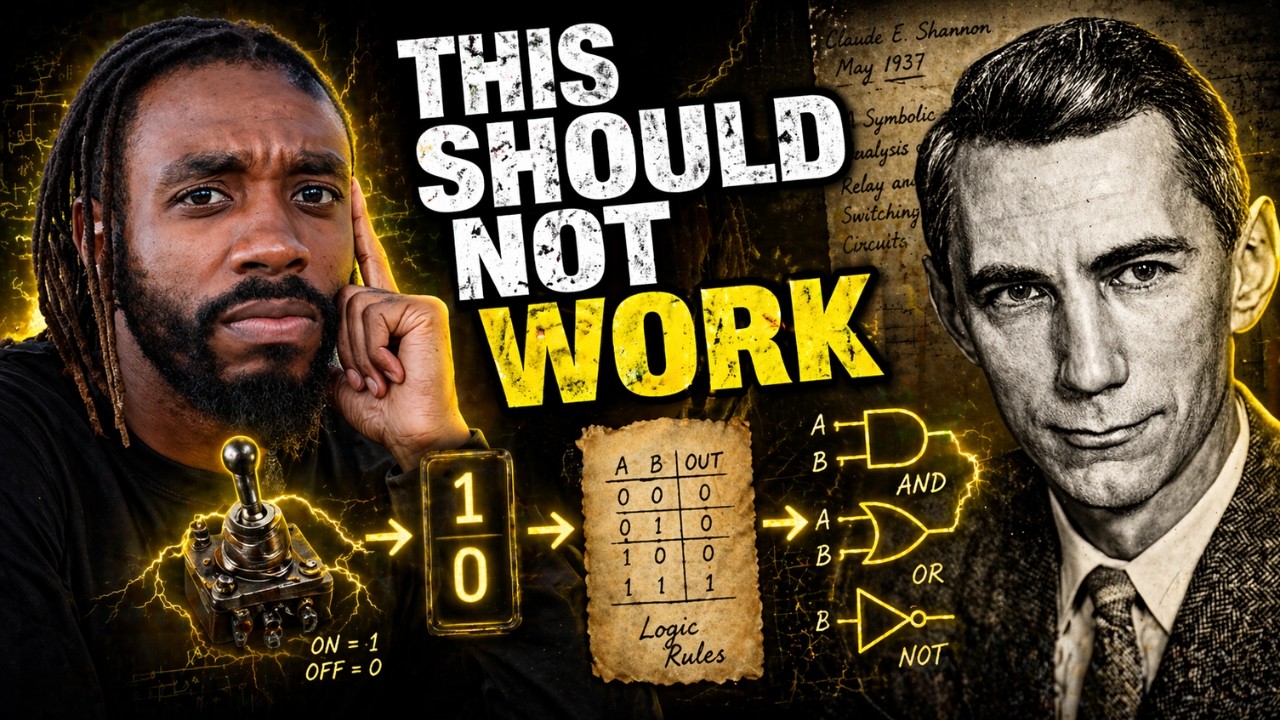

Claude Shannon is one of those names that should be far more famous than it is. He was an American mathematician and electrical engineer, and his work sits quietly underneath the digital world like foundation concrete. Most people never see it. They just live on top of it.

Shannon helped shape the foundations of digital computing and information theory. His crucial early breakthrough came in 1937, in his master’s thesis at MIT, “A Symbolic Analysis of Relay and Switching Circuits.”

Before Shannon, logic and circuits looked like separate worlds. One belonged to mathematics and reasoning. The other belonged to engineering and machinery. Shannon’s genius was seeing that these worlds were closer than they looked.

The breakthrough: logic could be wired

Mathematicians had Boolean algebra, a formal way of dealing with true and false. Engineers had switches, relays, circuits, and signals. Shannon looked at both and noticed the hidden connection.

Electrical switching circuits could represent logical operations. In plain English, logic did not have to stay on paper. You could wire it.

That idea did not produce the modern computer overnight, but it gave engineers a map. And in technology, a good map can be more dangerous than a finished machine.

A machine could now follow logic using physical parts. The circuit did not understand the rule, admire the rule, or pause to marvel at its own cognitive acuity. It simply behaved according to the rule.
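One common way to picture the connection: two switches in series only pass current when both are closed, which behaves like AND, and two switches in parallel pass current when either is closed, which behaves like OR. The Python sketch below models that reading. The function names are made up for illustration, and it is a simplified picture of the idea, not a reproduction of Shannon's own notation.

```python
def series(a: bool, b: bool) -> bool:
    """Two switches in a row: current flows only if both are closed (AND)."""
    return a and b


def parallel(a: bool, b: bool) -> bool:
    """Two switches side by side: current flows if either is closed (OR)."""
    return a or b


def normally_closed(a: bool) -> bool:
    """A contact that opens when activated: it inverts its input (NOT)."""
    return not a


def staircase_light(upstairs: bool, downstairs: bool) -> bool:
    """The light is on when exactly one of the two switches is flipped."""
    return parallel(
        series(upstairs, normally_closed(downstairs)),
        series(normally_closed(upstairs), downstairs),
    )


if __name__ == "__main__":
    print("up    down  light")
    for up in (False, True):
        for down in (False, True):
            print(f"{up!s:<5} {down!s:<5} {staircase_light(up, down)}")
```

The staircase light is built from nothing but the three basic arrangements, yet it already follows a rule nobody has to supervise: flip either switch and the light changes.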

Why this still matters in the age of AI

Today, people talk about software, apps, cloud computing, and AI as if they are floating somewhere above ordinary reality like digital incense. The interface feels smooth enough to make the machinery disappear, which is exactly why the machinery is worth remembering.

Under the surface, the glamour collapses into something more ordinary: states, signals, rules, and layers of engineering stacked until the ordinary starts looking miraculous.

A modern computer is not a brain in the poetic sense. It follows rules. Its power comes from how many rules it can follow, how quickly it can follow them, and how many layers of abstraction engineers can build above that foundation.

Yes and no become logic. Logic becomes arithmetic. Arithmetic becomes software. Software becomes the world we now live inside.
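To see how plain true-or-false decisions add up to arithmetic, here is a minimal sketch of an adder built only from AND, OR, and NOT. It follows the textbook full-adder design, chained one bit at a time. The function names and the eight-bit width are choices made for this example, not a description of any particular chip.

```python
def xor(a: bool, b: bool) -> bool:
    """True when exactly one input is true, built from AND, OR, and NOT."""
    return (a or b) and not (a and b)


def full_adder(a: bool, b: bool, carry_in: bool) -> tuple[bool, bool]:
    """Add three one-bit values, returning (sum bit, carry out)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = (a and b) or (partial and carry_in)
    return total, carry_out


def add(x: int, y: int, width: int = 8) -> int:
    """Add two numbers bit by bit, passing the carry along like a ripple."""
    carry = False
    result = 0
    for i in range(width):
        a = bool((x >> i) & 1)  # bit i of x
        b = bool((y >> i) & 1)  # bit i of y
        bit, carry = full_adder(a, b, carry)
        result |= int(bit) << i
    return result  # any carry past the top bit is dropped


if __name__ == "__main__":
    print(add(5, 3))
    print(add(100, 27))
```

Nothing in `full_adder` knows what a number is. It only combines yes-and-no answers, and addition falls out of the wiring.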

Final takeaway

The point is not that computers are simple. They are not. The point is that their complexity grew from a foundation simple enough for the physical world to trust.

Binary gave machines clean states. Switches gave those states a body. Shannon showed that logic could live inside circuits, not just on paper.

Once that happened, computing stopped being only a human activity. It became a machine process: repeatable, scalable, and fast enough to reshape civilisation while most of us were still arguing with printers.

Someone looked at a switch and realised it could do more than turn on a light. It could carry a symbol. It could follow a rule. It could become logic.

And once logic entered the machine, the machine entered history.

About Clueless Pundit

Clueless Pundit is Takura Nyagumbo’s computer history channel, built around one stubborn belief: the technology shaping our lives did not fall out of the sky. It came from people, machines, constraints, accidents, arguments, and strange little breakthroughs that deserve to be debugged properly.